Imec has unveiled a significant advance in managing heat in next-generation 3D HBM-on-GPU architectures, showing that its system-technology co-optimization (STCO) approach can dramatically reduce GPU temperatures under AI training workloads. Presented this week at the 2025 IEEE International Electron Devices Meeting (IEDM) and explained in a release, the work demonstrates how cross-layer design strategies can bring peak temperatures in 3D-integrated compute platforms down from more than 140°C to roughly 70°C.

For eeNews Europe readers — many of whom are working at the intersection of advanced packaging, semiconductor design, and AI acceleration — these findings offer valuable insight into the viability of dense 3D architectures and the essential thermal strategies that will shape next-generation compute systems.

3D HBM-on-GPU: density vs. heat

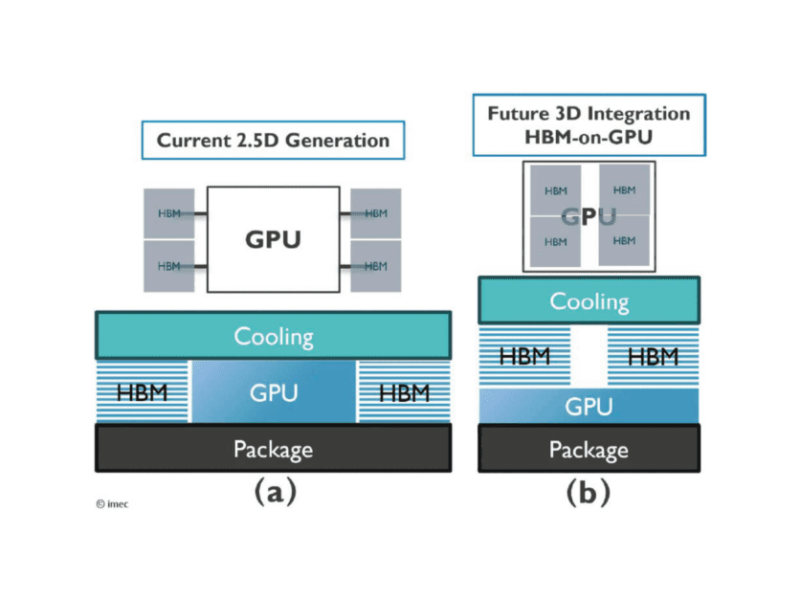

With AI models continuing to push the limits of memory bandwidth and compute throughput, engineers are exploring architectures that place high-bandwidth memory (HBM) directly on top of GPUs. Imec’s study focuses on a configuration with four GPUs per package and four HBM stacks, each comprising twelve hybrid-bonded DRAM dies, stacked directly on top of the GPU using microbumps. Compared with today’s 2.5D approaches, where HBM stacks sit alongside GPUs on a silicon interposer, this 3D layout promises substantial gains in memory capacity and bandwidth, Imec notes.

However, these benefits come with steep thermal challenges. Vertical stacking increases local power density and adds thermal resistance: with conventional top-side cooling, heat generated in the GPU must pass through the DRAM stack above it before reaching the heat sink. Initial simulations showed peak GPU temperatures reaching 141.7°C under realistic AI workloads, well above acceptable limits for both GPU and HBM operation. By contrast, a comparable 2.5D configuration under the same cooling conditions peaked at 69.1°C, Imec notes.
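A back-of-the-envelope way to see why stacking hurts is a one-dimensional steady-state model in which peak temperature equals coolant temperature plus total power times total thermal resistance, with resistances in series simply adding. The sketch below assumes a single top-side heat path; the function name `peak_temperature_c` and every numeric value are hypothetical illustrations, not imec's thermal model or data.

```python
"""Illustrative 1D steady-state thermal model (hypothetical values, not imec data).

T_peak = T_coolant + P_total * R_total, where series resistances add.
"""


def peak_temperature_c(t_coolant_c: float, power_w: float,
                       series_resistances_k_per_w: list[float]) -> float:
    """Return the peak temperature for heat flowing through layers in series."""
    r_total = sum(series_resistances_k_per_w)  # series thermal resistances add
    return t_coolant_c + power_w * r_total


# Hypothetical inputs chosen only to illustrate the trend.
T_COOLANT_C = 30.0
GPU_POWER_W = 500.0
HBM_POWER_W = 100.0  # assumed extra power dissipated by the DRAM stack

# 2.5D: GPU heat reaches the cold plate through die/TIM and the plate itself.
r_2_5d = [0.02, 0.03]
# 3D: same path plus an assumed resistance for the HBM stack and microbumps,
# which now sit between the GPU and the top-side cold plate.
r_3d = r_2_5d + [0.06]

t_2_5d = peak_temperature_c(T_COOLANT_C, GPU_POWER_W, r_2_5d)
t_3d = peak_temperature_c(T_COOLANT_C, GPU_POWER_W + HBM_POWER_W, r_3d)
print(f"2.5D: {t_2_5d:.1f} °C, 3D: {t_3d:.1f} °C")
```

With these made-up inputs the 3D case lands around 96°C versus about 55°C for 2.5D: both the extra layer in the heat path and the extra power flowing through it push the peak up, which is the qualitative effect the simulations capture in far more detail.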

Co-optimizing technology- and system-level thermal strategies

Imec’s research team approached the problem through STCO, jointly evaluating technology-level and system-level mitigation options in a comprehensive thermal model. The model used industry-derived power maps to locate hotspots, and this setup served as the baseline for optimization.

Technology-level levers included merging HBM stacks and optimizing the thermal properties of the silicon. At the system level, the researchers assessed measures such as double-sided cooling and GPU frequency scaling. According to Imec, combining these strategies reduced peak temperatures from an unmanageable 141.7°C to 70.8°C, within 2°C of the 69.1°C 2.5D baseline and thus in line with today’s practical 2.5D solutions.
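The two system-level levers can be illustrated with the same kind of lumped model. Double-sided cooling adds a second heat path in parallel with the first, and parallel resistances combine as R_a·R_b/(R_a + R_b), which is always smaller than either path alone. Frequency scaling lowers dynamic power, which is roughly proportional to C·V²·f. The sketch below assumes voltage is held fixed (so GPU power scales linearly with frequency) and that HBM power does not change; all names and numbers are hypothetical, and it does not reproduce imec's 70.8°C result.

```python
"""Hypothetical lumped model of double-sided cooling plus GPU frequency scaling.

All values are illustrative assumptions, not imec's model or data.
"""


def parallel_resistance(r_a_k_per_w: float, r_b_k_per_w: float) -> float:
    """Two heat paths in parallel: 1/R = 1/R_a + 1/R_b."""
    return (r_a_k_per_w * r_b_k_per_w) / (r_a_k_per_w + r_b_k_per_w)


def scaled_gpu_power(nominal_w: float, freq_ratio: float) -> float:
    """Dynamic power ~ C*V^2*f; with V held fixed (assumption), it scales with f."""
    return nominal_w * freq_ratio


T_COOLANT_C = 30.0
GPU_POWER_W = 500.0
HBM_POWER_W = 100.0  # assumed unaffected by GPU frequency

R_TOP = 0.11     # assumed path up through the HBM stack to a top cold plate
R_BOTTOM = 0.15  # assumed path down through the substrate to a second cold plate

# Baseline: top-side cooling only, nominal frequency.
baseline_c = T_COOLANT_C + (GPU_POWER_W + HBM_POWER_W) * R_TOP

# Mitigated: both cooling paths active, GPU clocked at 80% of nominal.
r_double_sided = parallel_resistance(R_TOP, R_BOTTOM)
power_scaled_w = scaled_gpu_power(GPU_POWER_W, freq_ratio=0.8) + HBM_POWER_W
mitigated_c = T_COOLANT_C + power_scaled_w * r_double_sided

print(f"single-sided, nominal f: {baseline_c:.1f} °C")
print(f"double-sided, 80% f:     {mitigated_c:.1f} °C")
```

Each lever helps independently: the second cooling path lowers the effective resistance, and the clock reduction lowers the power that must cross it, so their effects multiply in the temperature rise term.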

Imec notes that “the result demonstrates the strength of combining cross-layer optimization (i.e., co-optimizing the knobs at all the different abstraction layers) with broad technological expertise, a combination that is unique to imec.”

According to Imec, the study is the first full thermal STCO analysis of 3D HBM-on-GPU integration, and it underscores how coordinated engineering across packaging, device technology, and system design will be crucial for enabling the next wave of AI accelerators.

If you enjoyed this article, you will like the following ones: don't miss them by subscribing to:

eeNews on Google News