From self-driving cars to smart glasses, technology matures at CES 2026

This year’s pageant of technology in Las Vegas is highlighting the shift towards self-driving cars, smart glasses and humanoid robots. These have long been seen as technologies ‘of the future’ but are now rolling out in volume.

The Consumer Electronics Show (CES) 2026 saw the usual range of new TVs and PCs, including the first with Intel’s Core Ultra processors built on the 18A (1.8nm-class) process technology. But some key themes were strengthened, with significant moves in self-driving vehicles and humanoid robots, while the technology for smart glasses continues to bubble along in the background.

The numbers were up slightly on last year, with around 145,000 attending vs 142,465 in 2025, but down from the 171,000 in 2020 just before the Covid-19 pandemic broke in the West.

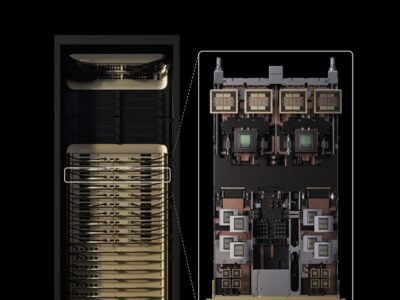

The move to autonomous vehicles was boosted by NVIDIA’s launch of a series of tools to help developers speed up their self-driving car programmes. The Alpamayo tools help with training end-to-end AI models in the cloud, while the latest version of the DRIVE Hyperion platform will be used by Aeva, AUMOVIO, Astemo, Arbe, Bosch, Hesai, Magna, Omnivision, Quanta, Sony and the ZF Group for sensors and electronic control units (ECUs) for driverless cars.

“Everything that moves will eventually become autonomous, and DRIVE Hyperion is the backbone that makes that transition possible,” said Ali Kani, vice president of automotive at NVIDIA. “By unifying compute, sensors and safety into one open platform, we’re enabling our entire ecosystem, from automakers to the AV software ecosystem, to bring full autonomy to market faster, with the reliability and trust that mobility at scale demands.”

This version of Hyperion supports cross-domain control of braking, suspension, and steering to provide synchronized, low-latency actuation with Hyperion’s real-time, safety-certified platform. It uses two of NVIDIA’s Blackwell-based DRIVE AGX Thor embedded GPUs that support transformer‑based perception, vision language action models and generative AI workloads that can reason about complex driving scenes in real time.

By building domain controllers or qualifying sensors and other technologies on DRIVE Hyperion, partners gain seamless compatibility with NVIDIA’s full‑stack AV compute platform, speeding development, simplifying integration and accelerating time to market. The common compute and sensor foundation means partner companies can focus on differentiation at the higher software and service layers.

There were also some key autonomous vehicle deals that highlight the maturing of the technology. Uber signed a deal with car maker Lucid to use autonomous technology from Nuro for a fleet of self-driving taxis later this year. Uber is investing $300m in Lucid and will buy a fleet of 20,000 self-driving vehicles using the Nuro technology. This is part of plans for a fleet of 100,000 robotaxis around the world by the end of this year.

Uber partnered with the Chinese AI company Baidu to launch its Apollo Go autonomous vehicles on the Uber platform in markets outside the US and China, initially aiming at London, in the first half of 2026. It also has an ongoing partnership with Alphabet-backed Waymo in parts of Austin, Texas, and Atlanta, Georgia.

Uber and Avride began offering robotaxi services in Dallas towards the end of 2025 and Uber also formed a strategic alliance with Pony.ai to launch robotaxis in the Middle East, starting with a pilot program that includes safety drivers. Additionally, Uber broadened its partnership and made investments in China’s WeRide to expand into new cities, notably in Abu Dhabi and Saudi Arabia.

May Mobility started to deploy thousands of autonomous vehicles through Uber in Arlington, Texas, at the end of last year, and Uber entered an agreement with Chinese self-driving firm Momenta to roll out services in Europe, with first deployments anticipated in early 2026. European car maker Stellantis will use technology from NVIDIA for driverless cars for Uber, with production aimed for 2028.

Humanoid robots

Hyundai Motor Group (HMG) is using its automotive engineering skills to boost the production of its Atlas humanoid robots. The Group says it will use its global mass-production capabilities and a robust value chain across its affiliates to expand into logistics, energy, construction, and facility management sectors, through collaboration between its Boston Dynamics subsidiary and global AI companies such as Google-subsidiary DeepMind.

The Group’s manufacturing facilities are helping to develop reliable robots that are tested and trained internally. Hyundai Motor Company and Kia will provide manufacturing infrastructure, process control and large-scale production data, while Boston Dynamics helps to develop high-performance actuators, standardize key components and optimize designs for manufacturability. Hyundai Glovis then optimizes logistics and supply chain management.

“By integrating all these areas of expertise, we can offer ongoing customer support after deployment, including regular software updates, hardware maintenance, repairs, and remote monitoring and control. These services enable us to oversee the process from start to finish, helping to bring AI robotics solutions to market,” said the company.

Atlas was created by Boston Dynamics as a product-ready robot for industrial work alongside humans, especially in dangerous or challenging environments. The robots will be trained at the Robot Metaplant Application Centre (RMAC) in the US, which will open later this year.

NEURA Robotics in Germany also showed the next generation of its humanoid robot, 4NE1, designed in collaboration with Studio F.A. Porsche, the designers behind the Porsche 911.

“The world is preparing for robots, but robots also need a world that is prepared for them. With the Neuraverse, we’re building the invisible engine that connects, empowers, and scales intelligence across every device and environment,” said David Reger, Founder and CEO of NEURA Robotics in Metzingen.

The 4NE1 humanoid robot launched by NEURA Robotics

Most robots work independently with siloed data. The company’s Neuraverse operating system connects them on a shared platform, allowing robots to instantly share knowledge and accelerate collective learning. The software is open for early-access partners to let developers share, monetize, and deploy robotic skills with unified data and AI models. This takes advantage of network effects, where the more participants there are, the faster everyone learns.

NEURA also launched a four-legged explorer built for challenging terrain and complex environments. It is engineered for multimodal cognitive interaction and fully autonomous operation. Less than a meter in height and with a 20kg payload, the robot has 360° environment vision and Neuraverse connectivity.

A smaller version of the 4NE1 is only 20cm high and combines natural language interaction, computer vision, and reinforcement learning with multi-language voice recognition and human detection safety features.

All the robots are powered by NVIDIA Isaac GR00T, an open foundation model for humanoid robot reasoning and skills, and are simulated using NVIDIA Isaac Lab and Isaac Sim, open-source frameworks for robot learning and simulation.

Other humanoid robots showcased at the event include the G1, H2, and R1 developed by Unitree Robotics, a prominent Chinese company, while Shenzhen-based EngineAI showed its T800 humanoid robot. Named after the original Terminator robot, the T800 is equipped with a fully-integrated, high-torque joint module architecture capable of delivering up to 450 Nm of peak torque and 14,000 W of instantaneous joint peak power. Combined with high-degree-of-freedom joint structures in key areas such as the neck, waist, and hands, this provides a high level of anthropomorphic mobility. In high-dynamic scenarios like martial arts and running, it delivers industry-leading dynamic output and load-handling capability.

LG also showed its CLOiD robot, which is designed to perform household tasks such as folding laundry, loading dishwashers, and preparing food using conventional appliances.

The robot incorporates a wheeled base to enhance stability and features a torso with adjustable height, enabling interaction with countertops and cabinets. Each of its dual arms provides seven degrees of freedom and is equipped with five digits for precise object manipulation.

Smart glasses

The technology for smart glasses was also on show at the exhibition, with several new designs pushing the boundaries of the wearable technology.

TDK spun off its low-power AI vision chip technology as AI Sight, with a reference design that uses a wide range of other TDK components. At the same time, Vuzix showed its waveguide technology integrated with display projectors from partners that include Avegant, Himax, Hongshi, JBD, and Saphlux. Paired with cutting-edge display engines, from microLEDs to ultra-compact, full-color LCoS projectors, as well as a novel laser-LCoS projector from partner Vitrealab, these are used for the next generation of lightweight, AI-powered wearable devices.

Also shown was a jointly developed binocular smart glasses reference design available to be manufactured by Quanta Computer for OEMs. This pairs Vuzix’s waveguides with an Avegant full-colour LCoS light engine to deliver bright, crisp visuals in a discreet binocular form factor. It also adopts a prescription lens system that supports users with or without vision correction, tackling one of the key challenges for a wide range of potential smart glasses users.

Tokyo-based startup Cellid is using Foxconn subsidiaries Jorjin Technologies and GIS (General Interface Solution) for the development and manufacturing of its HJ1 AI smart glasses. These weigh 46g, using Cellid’s ultra-thin, high-brightness waveguide technology and a precision optical and microLED display by GIS, while the product design, system development, and manufacturing are handled by Jorjin Technologies. A built-in camera, microphone, and speaker enable audio, video, and AI functions running on Arm’s Cortex-A32 and Cortex-M55 processor cores.

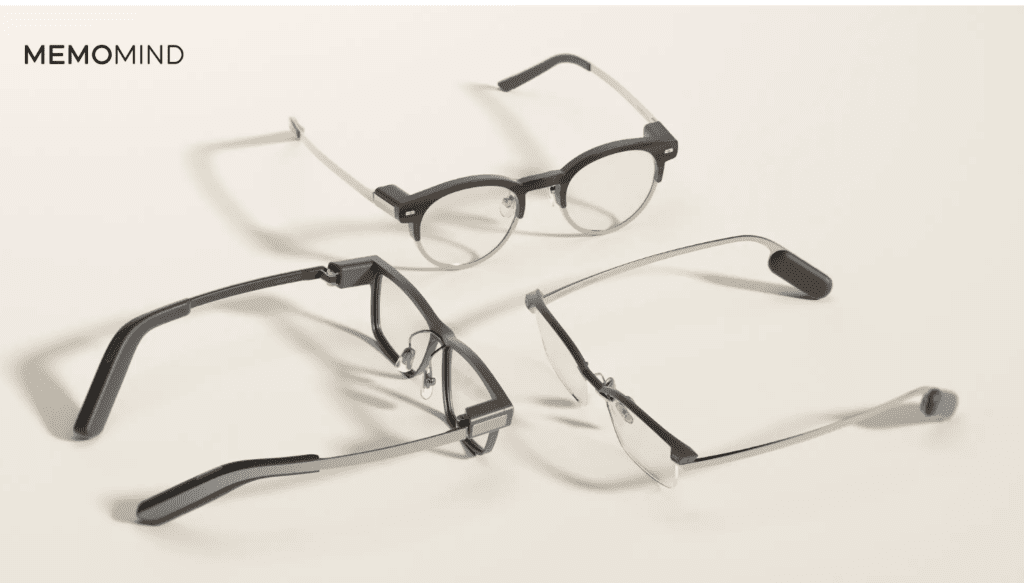

Chinese projector maker XGIMI launched into the smart glasses market at the show with three ranges. The Memo Air Display AI glasses weigh 28.9g and have eight frame styles and five interchangeable temple designs, along with full prescription-lens support. This modular approach allows users to tailor their glasses to their style, needs, and routines, which is not possible with many rival designs. The Memo Air has a display for a single eye and no speakers, while the heavier (45g) Memo One has dual displays and integrated speakers, the difference indicating the added weight of the second light engine and the speakers.

The MemoMind smart glasses from XGIMI

“Why choose AI glasses?” said Apollo Zhong, XGIMI Founder and lead investor of MemoMind. “Glasses are the most natural, lowest-friction form factor for intelligence. They fit into people’s lives without requiring new habits. We didn’t choose AI glasses—this is simply the form that makes the most sense for delivering real, everyday intelligence.”

TriLite in Vienna, Austria, showed its Trixel 3 Cube, an ultra-compact, cube-shaped laser beam scanning (LBS) projector developed to simplify system integration and support scalable manufacturing. By eliminating the need for relay optics, the Cube reduces overall display volume and enables greater freedom in industrial design, key requirements for wearable AR devices. While the cube is aimed primarily at consumer smart glasses, it is also being evaluated for automotive display applications, including head-up displays and free-space projection.

“Automakers are increasingly seeking advancements in Augmented Reality Head-Up Displays (AR HUDs). TriLite is addressing key limitations of conventional HUD technologies, including the size and inflexibility of TFT-based systems and the optical complexity and efficiency challenges associated with DLP solutions,” said Kevin Mak, Principal Analyst for Automotive Market Analysis at TechInsights.

Lenovo showed a concept for AI glasses that link wirelessly to a smart device to act as a display. The glasses weigh just 45g and have a battery life of 8 hours. They can be used as a teleprompter for presentations and speaking engagements, and support sub-millisecond live translation and intelligent image recognition. This is powered by Lenovo Qira, an AI agent that runs across all Lenovo devices, from PCs to Motorola phones.

Edge AI microcontrollers

Other notable developments at the show include an edge AI wireless microcontroller from Nordic Semiconductor and a sub-threshold neural processor from Ambiq Micro.

Nordic’s nRF54L Series of systems on chip (SoCs) now integrates the Axon neural processing unit (NPU) that came with its acquisition of Atlazo in 2023.

The nRF54LM20B SoC is the first large-memory member of the nRF54L Series, integrating the Axon NPU. This provides up to 7 times faster performance and up to 8 times higher energy efficiency versus competing solutions for tasks such as sound classification, keyword spotting, and image-based detection, says the company.

The nRF54LM20B SoC pairs the Axon NPU with 2 MB NVM, 512 KB RAM, a 128 MHz ARM Cortex-M33 plus RISC-V coprocessor, high-speed USB, up to 66 GPIOs, and Nordic’s fourth-generation ultra-low-power 2.4 GHz radio supporting Bluetooth LE, Bluetooth Channel Sounding and Matter over Thread.

The Axon runs small Neuton edge AI models that are typically under 5 KB and up to 10 times smaller, faster, and more efficient than other CPU-run models. Nordic Edge AI Lab helps developers generate custom Neuton models for anomaly detection, activity and gesture recognition, biometric monitoring, and more to provide real-time intelligence on tiny batteries and constrained memory, without cloud dependency.

“With Nordic Edge AI Lab, Neuton models, and the Axon NPU, Nordic makes advanced on-device AI practical for every embedded developer,” said Oyvind Strom, EVP Short-Range BU at Nordic Semiconductor. “Developers get the simplicity to move fast, and the disruptive performance to scale from wearables to industrial sensing – all enabled within Nordic’s trusted ultra-low-power hardware solutions.”

For smart glasses and other applications, Ambiq has developed a version of Arm’s Ethos-U85 neural processing unit using its ultra-low power Subthreshold Power Optimized Technology (SPOT) platform. The resulting Atomiq is the first SoC to leverage sub- and near-threshold voltage operation for AI acceleration, with over 200 GOPS of AI performance. The platform also includes dynamic voltage and frequency scaling (DVFS) with a wide range that enables operation at lower voltage and lower power than previously possible, making room in the power budget for unprecedented levels of intelligence.

This works with Ambiq’s Helia AI platform, AI development kits (ADK) and the modular neuralSPOT SDK to deliver a tightly integrated hardware-software stack that is designed to work in a smaller memory footprint at much lower power for AI cameras, smart glasses and industrial robotics.

“The Atomiq product family represents a step-change in energy-efficient edge AI,” said Scott Hanson, CTO and founder of Ambiq. “By combining the Atomiq system architecture with Arm’s Ethos-U85, we enable significantly larger AI models at the edge with industry-leading energy efficiency, powered by our SPOT platform. Together, these capabilities form the foundation for true ambient intelligence.”

“From smart cameras to wearables, edge devices now require increasing levels of AI performance within extreme power and thermal constraints,” said Lionel Belnet, senior director of hardware product management, Edge AI Business Unit at Arm. “Built on the Arm compute platform, Ambiq’s Atomiq SoC shows what’s possible when AI acceleration is designed specifically for the edge, enabling richer audio, vision, and reasoning directly on-device for a new class of intelligent, battery-powered edge systems.”

www.nvidia.com; www.ambiq.com; www.trilite.com; www.nordicsemi.com; www.hyundaimotorgroup.com; www.memo-mind.com
