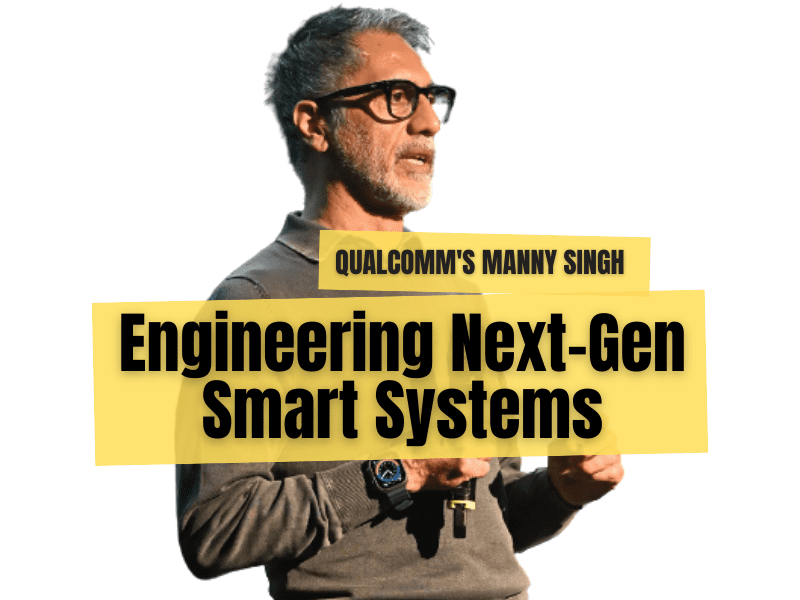

Engineering the next generation of smart systems: Q&A with Manvinder “Manny” Singh

For engineers building the next generation of smart devices, edge AI is opening powerful new possibilities. Manvinder “Manny” Singh, VP of Product Management at Qualcomm Technologies, talks about how the company is simplifying machine learning development, empowering embedded engineers with better tools, and driving a new era of on-device intelligence through its partnership with Edge Impulse.

Abate: You’ve been working at Qualcomm since 1996. When did AI start becoming a strategic topic at Qualcomm?

Manvinder “Manny” Singh: AI began gaining strategic traction more than 10 years ago, particularly as we recognized the potential of on-device intelligence to transform mobile device experiences. Qualcomm Technologies began embedding AI capabilities into its Snapdragon® mobile platforms nearly a decade ago. This included the development of the Qualcomm® Hexagon™ DSP, Qualcomm® Adreno™ GPU, and Qualcomm® Kryo™ CPU, which together formed a heterogeneous computing architecture optimized for AI workloads. These components enabled real-time inferencing for tasks like computational photography and voice recognition, laying the foundation for our edge AI strategy. Subsequent developments in neural network accelerators started to converge with our leadership in low-power compute, making AI at the edge a natural evolution.

Abate: When did Edge Impulse land on your radar?

Singh: Qualcomm Technologies’ diverse portfolio of SoCs, from entry-level to high-performance, posed a challenge in delivering a unified AI development experience. Developers struggled with inconsistent tooling, a lack of abstraction layers, and limited support for hardware-aware model optimization. Our internal assessment emphasized the need for an end-to-end MLOps platform that could simplify AI development across all tiers of Qualcomm Technologies silicon. Edge Impulse came onto our radar more than a year ago as we saw growing developer momentum around their platform. Qualcomm Technologies’ internal platforms did not offer comprehensive tools for data collection, labeling, and transformation, which are critical steps in building edge ML models. Edge Impulse addressed this. Their focus on simplifying ML development for embedded devices aligned perfectly with our vision of democratizing edge AI.

Abate: Can you describe Qualcomm’s long-term vision for AI at the edge and how Edge Impulse fits into that strategy?

Singh: Our long-term vision for edge AI is centered on enabling intelligent, autonomous systems across industrial, consumer, and enterprise domains. Edge Impulse accelerates this by providing a developer-friendly platform that bridges data collection, model training, and deployment on devices powered by Qualcomm Technologies.

Abate: With Qualcomm’s acquisition of Edge Impulse, how is the end-to-end ML workflow improved for developers, from data acquisition to deployment and model monitoring?

Singh: Edge Impulse offers a single, intuitive interface that supports direct data ingestion from Qualcomm Technologies dev boards, assisted labeling and synthetic data generation, signal processing, model training, automatic model tuning for performance, power, and memory constraints, and seamless deployment across our SoCs, all without requiring ML expertise. Developers can now go from concept to deployment in days instead of months, thanks to pre-integrated dev kits, built-in support for various model architectures, and access to GPU training. The acquisition also reinforces the shift from a product-centric to a platform-first strategy, making Qualcomm Technologies more relevant to long-tail developers and ecosystem partners.

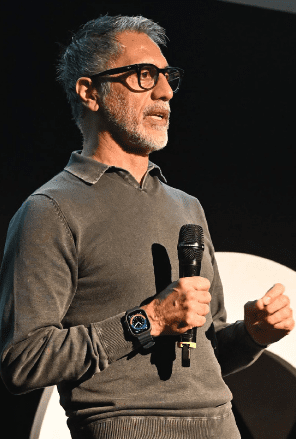

Manvinder “Manny” Singh (Source: Qualcomm)

Abate: How do you envision the role of AI evolving in industrial IoT over the next three to five years?

Singh: AI will become the nervous system of industrial IoT, enabling predictive maintenance, adaptive control, and real-time decision-making. We’ll see a shift from reactive systems to proactive, self-optimizing operations that reduce downtime and improve safety.

Abate: With edge AI becoming more prevalent, what are the biggest technical challenges you see for real-time inference on constrained devices?

Singh: The key challenges are balancing compute performance with power efficiency, ensuring model robustness in noisy environments, and enabling secure, low-latency inference. Running AI models on constrained edge devices requires aggressive optimization, quantization, pruning, and hardware acceleration, which are technically demanding and error-prone without proper tooling. Developers face a fragmented landscape of SDKs, compilers, and runtime environments. Each hardware platform may require unique model formats, custom deployment pipelines, and manual tuning for memory and latency, all of which slow development and steepen the learning curve. Without structured MLOps support, developers struggle to maintain model performance over time, especially in industrial environments where reliability is critical. We’re addressing these through dedicated AI accelerators and software toolchains optimized for edge deployment.
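
For readers unfamiliar with the two optimization techniques Singh names, the following is a minimal illustrative sketch in plain Python of magnitude pruning and symmetric int8 quantization applied to a flat list of weights. It is not taken from Qualcomm Technologies or Edge Impulse tooling; the function names (`prune_smallest`, `quantize_int8`, `dequantize`) and the per-tensor scaling scheme are assumptions chosen for clarity, and production toolchains typically apply more elaborate variants.

```python
def prune_smallest(weights, sparsity):
    """Magnitude pruning: zero out the given fraction of smallest-magnitude weights."""
    k = int(len(weights) * sparsity)  # number of weights to drop
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    drop = set(order[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0  # avoid division by zero
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the int8 values."""
    return [v * scale for v in quantized]


if __name__ == "__main__":
    weights = [0.5, -1.27, 0.01, 0.9]  # hypothetical example values
    q, scale = quantize_int8(weights)
    restored = dequantize(q, scale)
    worst = max(abs(a - b) for a, b in zip(weights, restored))
    print("int8 values:", q)
    print("worst-case rounding error:", worst)
    print("pruned at 50% sparsity:", prune_smallest(weights, 0.5))
```

Each weight is stored in one byte instead of four, at the cost of a rounding error of at most half the scale step, which is the kind of accuracy-versus-memory tradeoff that has to be tuned per device.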

Abate: How close are we to seeing fully autonomous IoT systems powered entirely by edge AI, and what would that mean for industrial and consumer applications?

Singh: We’re closer than many think. Autonomous edge AI is already transforming industrial IoT (predictive maintenance, anomaly detection, and energy optimization in factories and smart grids); retail (cashier-less stores, shelf-edge intelligence, and real-time inventory tracking); healthcare (wearables that monitor vitals and detect emergencies autonomously); and robotics and drones (visual inspection, navigation, and task execution without human intervention). Full autonomy will unlock new levels of efficiency, safety, and personalization without relying on cloud connectivity.

Abate: Could you envision a future where AI at the edge anticipates problems or opportunities before humans even recognize them?

Singh: Absolutely. That’s the promise of predictive AI. With enough contextual data and real-time processing, edge AI can detect anomalies, forecast failures, or even suggest optimizations — empowering humans to focus on higher-level decision-making.

Abate: If you were to predict the “next frontier” for AI at the edge, what applications or scenarios are most likely to surprise the industry and developers alike?

Singh: I believe we’ll see breakthroughs in edge AI for environmental sensing, personalized healthcare, and autonomous robotics. The combination of multimodal sensing and real-time inference will enable applications we haven’t even imagined yet. Generative AI is rapidly emerging as a transformative force in edge computing, unlocking new possibilities for autonomous systems, industrial IoT, and personalized user experiences. Qualcomm Technologies is at the forefront of this shift, pioneering on-device GenAI capabilities that are redefining what edge devices can do. Our hybrid edge-cloud architecture balances performance, privacy, and scalability, which makes it well suited to enterprise use cases such as worker assistance: chatbots and Q&A systems, real-time translation, summarization, and document generation.

Abate: If you could give one piece of advice to engineers and developers preparing for the future of AI at the edge, what would it be?

Singh: Focus on understanding the full stack — from sensor data to model deployment. The most impactful solutions will come from those who can bridge hardware constraints with software innovation and user-centric design.

Editor’s Note: This interview first appeared in the 2025 Edge Impulse guest-edited edition of Elektor. eeNews Europe is an Elektor International Media publication.

