Edge Impulse’s Jan Jongboom on scaling AI to the smallest devices

How do you go from tracking sheep in the Norwegian wilderness to co-founding one of the leading platforms for edge machine learning? In this in-depth conversation, Jan Jongboom shares the surprising origins of his AI journey, the pivotal moments that sparked the launch of Edge Impulse, and the lessons learned while building a thriving developer community. Along the way, he reflects on unexpected use cases, busts myths about deploying AI at the edge, and looks ahead to the future of embedded intelligence in the Qualcomm era.

Abate: When did you first become exposed to artificial intelligence, and what led you to focus on it?

Jan Jongboom: In 2015 I was working at Telenor (big Norwegian telco), and we were asked whether we could come up with a system to help track whether a sheep was active or not for a use case with Telespor. Many of the sheep in Norway just roam around in the summer, and if a bear (or other wild animal) attacks them, you would want to know that quickly — so adding some activity recognition to the Telespor trackers would help with that.

A colleague figured that we could use some ML on accelerometer data to track that; so over two days during a hackathon, we improvised a system with a mobile phone to collect live data, had colleagues doing various activities around the hackathon space, hacked together a rudimentary ML model, and then had a computer to run inference to detect different activities. This whole setup was very clunky (and didn’t generalize super well), but it did show that we could detect some basic movements from the accelerometer (video still online) — which was super cool.

So, this has always been in the back of my mind as I was starting to do more IoT work. There’s stuff that’s very hard to program out in a control loop as humans (detecting different movements on accelerometer data), but ML could theoretically figure out relations in that data quickly. However, in my mind, ML was associated with lots of compute (we needed a Macbook to run the ML model back in 2015); and I never imagined we could scale it down to something that could run on the device that gathers data itself.

Fast forward to late 2017. I was in Taipei and I grabbed a drink with Neil Tan, who was a marketing engineer in the office there, and he explained something that I completely missed so far: a neural network is just a bunch of math operations, and microcontrollers are pretty good at math. So just make the network small enough, be smart about paging in/out the numbers that you need, and bam — you can run ML models on the smallest of devices. Neil didn’t just talk about that, he prototyped it out with uTensor — the first neural network runtime for MCUs.

This was absolutely mind-blowing to me, and that evening I posted the uTensor GitHub page on Hacker News. Next morning, Neil wakes up to being no.1 on Hacker News and 600 new GitHub stars. I very quickly hired Neil into my team, we incubated uTensor as an official labs project, and eventually merged our efforts with Google, where they were building TensorFlow Lite for Microcontrollers.

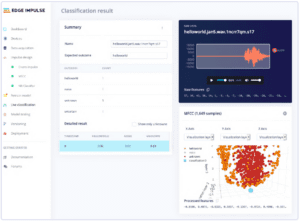

Inspecting a misclassified label

Abate: Take us back in time. How did you end up launching Edge Impulse?

Jongboom: In 2019 all the building blocks for on-device ML seemed to be in place. So Zach Shelby and I got talking, and we figured that to build real embedded ML applications, you need to build so much more than just a runtime. You need data collection from real devices, DSP algorithms, ML building blocks, example firmware, device drivers that hook into the models, etc. None of that existed, let alone in an easy-to-use package. So, we decided to build an end-to-end platform for on device ML: Edge Impulse.

We engaged with our first customer around November 2019 (Oura), and went live for the community in January 2020 during The Things Conference in Amsterdam. As an homage to the 2015 demo, we dressed up Johan Stokking (CTO of The Things Industries) as a sheep, and recreated the Telespor demo, now running fully on device! You can watch the video here.

Abate: What were some key challenges during your early growth phase, and how did partners or early adopters influence your trajectory?

Jongboom: “Build it and they’ll come” is BS. It requires a ton of work to get the word out on anything new, and even more work to build a lasting community. Fortunately, both Zach and I had experience building developer communities earlier. Zach launched and ran micro:bit a few years prior, and I ran the developer relations team for an IoT group and worked on Firefox OS and Cloud9 IDE before, which had a big developer community component.

In the early days, we tried to plug Edge Impulse wherever we could, and sort of expected big boosts in signups from that, but that never happens — it’s all a slow burn. Some things we’ve done: we collaborated early on with Hackster to get announcements and projects out (including a launch date post), we were on stage a lot (whoever wanted to have us on their conference), we wrote lots and lots of content trying to hit the Hacker News frontpage, we collaborated with silicon vendors on content/events, we created two courses on Coursera (which 50K people have done now!), and we sponsored two of our folks to write a book for O’Reilly. None of these caused an immediate spike in user signups, but it builds up. Everything you do is cumulative here, and at some point you have such a big presence that every day new folks will find you just through all the stuff you’ve done in the past.

One thing we did do well (in my opinion) early on was treating our users very nicely. Be open and transparent, own up to mistakes, answer questions on the forum quickly, etc. Here’s a quote from our “tech philosophy” document we had all new hires read: “If someone has gone through the trouble of finding us, signing up, trying something, and then asking a question on the forum — we should treat them as royalty. This does not just go for immediate commercial prospects. The makers of today are the professionals of the future.”

Abate: Was your vision always focused on edge ML, or did that emerge after experimenting with various AI deployment challenges?

Jongboom: No, we focused on exactly that from the beginning and haven’t diverged from it. Our original vision was “Machine learning at the edge will enable new value for business and society, making efficient use of device data by millions of developers on 100s of billions of devices” — which Zach and I penned down over sushi in Capitola a few months before starting the company. That hasn’t changed a bit since we started the company (and fully aligns with Qualcomm’s vision for the industry). You see that even in our product, if you look at videos from the earliest prototypes (e.g., two months in), the product still resembles what we have today a lot. (If you ever meet me in person, I’ll show the video in exchange for a beer!)

Abate: What do you believe makes a great developer experience in embedded AI? How do you ensure your platform delivers that?

Jongboom: Oh, do you have a minute. So, first and foremost. Users don’t come to you to do embedded AI — they come to you because they have a problem that they want to solve, and they think AI can help them with that. Pretty simple. So the faster you can get them on that track to solve the problem the better. On the community side, we try to do that with great tutorials as well as offering base building blocks that lots of people need (e.g., a visual anomaly detection block or a PPG->HRV block). On the customer side, we do this with a solutions engineering team that works closely with the customer (if needed).

Then, on how the platform does that… First, writing reusable embedded source code is hard… On the embedded side, we wanted to deliver source code (instead of compiled libraries), not have any external dependencies, and require as little flags as possible, so everyone could just drop the generated model and our SDK into a firmware project, and it should work on anything under the sun (as long as it has a C++11 compiler). That resolves a lot of problems. Hardware acceleration we layered in, but we always have software fallback. This has gotten more complex, as we have NPUs we need to support (which require compiling your neural network to something the NPU can run), and as we now support so many distinct compute targets — but the core principles remain.

On the platform side we really try to do everything end-to-end. A user should come in, and be able to build a project from start to finish. That’s from first data collection to having a model run on a device. For a long time I also refused to have pretrained models/prebuilt datasets on the platform, as I believe that you only see the power of ML when you apply it on your own data — again, we’re loosening that a little as we’re getting bigger. The hard part with doing everything end-to-end is that people will eventually want to do something that’s not supported. Building good on- and off-ramps to take stuff out of the platform, and then back in is super hard. Some parts we’ve done a really good job I think, for example, it’s very easy to bring in a new ML architecture, and we have APIs for literally everything in the platform. But other parts we still need to improve.

Using your own tools a lot helps too. Back in the day, I helped customers every day — that teaches you a thing or two about how people use your platform. These days we have a pretty sizeable solutions team that works with customers, and we sync to them often.

Abate: Have any unexpected use cases emerged that surprised you or shifted your roadmap?

Jongboom: When we started Edge Impulse, we figured the major chunk of our customers would be in industrial IoT. But instead, we landed our first customer in consumer health (Oura), and that is still a huge vertical for us. I knew nothing about this when we started. I flew to Singapore with the Oura team to help set up a sleep study in a university hospital, and got a crash course in what’s required for clinical data collection. All the stuff that I learned there on data sanity, working with labs, logging, traces, data quality, data syncing, etc., has driven a huge part of our roadmap over the years — and we continue to work with some of the biggest names in consumer health and fitness.

Elektor: How do you see emerging trends like tinyML, neuromorphic computing, or on-device generative AI affecting the platform’s future?

Jongboom: GenAI is super interesting. LLMs are fantastic because they’re zero-shot. You don’t need to train them, just give a prompt and now they’re a classifier (“person wears personal protective gear y/n”). Super powerful — not just in the field, but also to very quickly label data. GenAI is also fantastic for synthetic data; I can ask the machine to say “Hey Elektor” 100× and a minute later I have a nice dataset to train a keyword spotting model on. We’ve integrated LLM based labeling and GenAI Synthetic Data in the platform last year already, and we see very good adoption of both features.

Then devices also get faster and faster (and models smaller and smaller), so we can now run multi-modal LLMs on IoT hardware (we showed one running on a Qualcomm DragonwingTM IQ9 Series platform during Embedded World). These models are still not as good as their cloud counterparts, and they’re quite slow (not good for real-time analysis), but they still open up so many other new possibilities, like model cascades. This is where a small, very fast model checks what’s happening, then cascades to a big model to do analysis (which might take long or lots of energy, could even be in the cloud). And all of this just started. I can’t wait to see what’ll happen in the next five years in this space.

Jan Jongboom

Abate: What are the biggest myths or misunderstandings about deploying AI at the edge that you wish more people understood?

Jongboom: It comes in phases. The first hurdle people need to go over is that edge AI is nothing more than a fancy way of writing your control loop. There are inputs, there’s an output. You can write the rules of how to map input to output in the middle yourself using code, or you can let the computer create an algorithm using AI. AI models in general are just much better at uncovering complex relationships than you can do by hand. (Try writing some code that detects an elephant trumpeting by looking at a WAV file — it’s hard!) But in the end, however, the outcome is exactly the same: it’s a bunch of math that gets compiled to machine code — nothing more, nothing less.

The second one is that it’s still engineering. It’s great that you can throw an image at ChatGPT and ask “is this person wearing protective equipment?” and get an answer that is correct for that picture — but does that give you enough trust to deploy that model in a real factory? And building real applications requires systems engineering: power management, latency requirements, no unlimited RF budgets. Because it’s possible to get to 80% of a solution so quickly now (“look the model works!”) people might forget that the remaining 20% takes 90% of the work (hardware is hard!).

Abate: Now that Edge Impulse is part of Qualcomm, how will the developer community benefit from expanded resources, platforms, and reach?

Jongboom: We can do a lot more, a lot faster. On the free Developer tier, we’ve upped compute limits, enabled GPUs for everyone, and now expose Edge Impulse-created model architectures like FOMO-AD (visual anomaly detection) that were previously only available for paid customers.

And Qualcomm Technologies has some very interesting, super-powerful hardware coming (e.g., Dragonwing IQ9) that lets us run LLMs on the edge for the first time; so we’ll be coming out with full LLM support in Edge Impulse Studio soon, plus the ability to define model cascades. That’s super exciting for me, as it lets you model a complete system — rather than just a model.

Last, as part of Qualcomm, we remain committed to supporting the complete IoT ecosystem, and you can build models that collect data and run on almost any hardware (We have 131 distinct deployment targets today!) — that doesn’t change, and we’ll keep adding support for new devices, processors, and neural accelerators for other vendors.

Editor’s Notes: Qualcomm-branded products are products of Qualcomm Technologies, Inc. and/or its subsidiaries. This interview first appeared in the 2025 Edge Impulse Guest-Edited edition of Elektor. eeNews Europe is an Elektor International Media publication.

If you enjoyed this article, you will like the following ones: don't miss them by subscribing to :

eeNews on Google News

If you enjoyed this article, you will like the following ones: don't miss them by subscribing to :

eeNews on Google News